AI Vibe Coding: Why I Let AI Write Both My Code AND Tests

Apr 18, 2025 · 3 min read

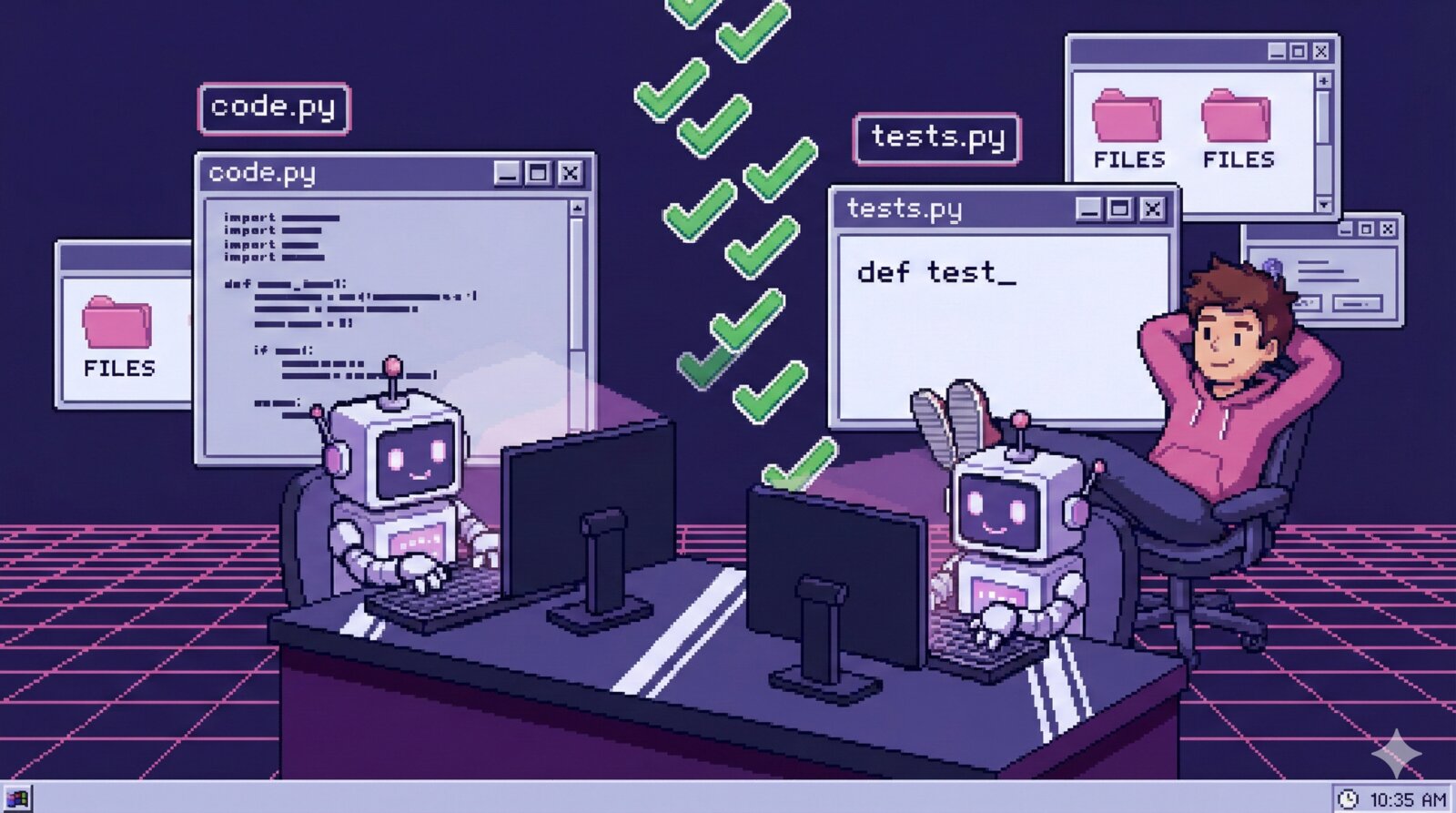

I let AI write both my code AND tests, and the results demolished my expectations.

There’s a common objection to AI-generated code: “How do you know it’s correct?” The usual answer involves careful code review, which is valid but incomplete.

Here’s my approach: Let AI write the tests too.

The Initial Skepticism

When I first tried this, I was skeptical. If AI writes broken code, won’t it just write tests that pass for that broken code?

The surprising answer is: not usually. Here’s why.

Different Prompting, Different Assumptions

When you ask AI to implement a feature, it makes certain assumptions about the requirements. When you ask it to write tests for that feature, it approaches the problem fresh, often making different assumptions.

This mismatch is actually valuable. The tests often catch edge cases the implementation missed.

Example: I asked Claude to implement a function that processes user input. The implementation handled the happy path well. When I asked for comprehensive tests, it generated tests for:

- Empty strings

- Unicode characters

- Extremely long inputs

- Inputs with special characters

Several of these tests failed, revealing bugs I wouldn’t have thought to look for.
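To make those categories concrete, here is a minimal sketch of what such edge-case tests can look like. The `clean_username` function, its 64-character limit, and its list of rejected characters are all hypothetical, invented for illustration. They are not the function from my project. The sketch uses the standard library's `unittest` so it runs without Django.

```python
import unittest


def clean_username(raw: str) -> str:
    """Hypothetical input processor: trim, validate, and cap length."""
    if not isinstance(raw, str):
        raise TypeError("username must be a string")
    cleaned = raw.strip()
    if not cleaned:
        raise ValueError("username must not be empty")
    if len(cleaned) > 64:  # assumed limit, chosen for the example
        raise ValueError("username is too long")
    if any(ch in cleaned for ch in "<>&\"'"):  # assumed deny-list
        raise ValueError("username contains unsupported characters")
    return cleaned


class CleanUsernameEdgeCaseTests(unittest.TestCase):
    def test_empty_string_is_rejected(self):
        # Whitespace-only input should count as empty too.
        for raw in ("", "   ", "\n\t"):
            with self.subTest(raw=raw):
                with self.assertRaises(ValueError):
                    clean_username(raw)

    def test_unicode_is_preserved(self):
        self.assertEqual(clean_username("  Zoë 山田  "), "Zoë 山田")

    def test_extremely_long_input_is_rejected(self):
        with self.assertRaises(ValueError):
            clean_username("a" * 10_000)

    def test_special_characters_are_rejected(self):
        with self.assertRaises(ValueError):
            clean_username("<script>alert(1)</script>")
```

Saved to a file, this can be run with `python -m unittest`. A first-pass implementation that only strips whitespace would fail three of these four tests, which is the kind of mismatch described above.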

The Django Sweet Spot

This approach works especially well with Django because of its excellent test infrastructure:

    from django.contrib.auth.models import User
    from django.test import Client, TestCase

    from .models import Contact  # assumed location of the Contact model


    class ContactAPITests(TestCase):
        def setUp(self):
            # Fresh client and user for every test
            self.client = Client()
            self.user = User.objects.create_user(
                username='testuser',
                email='test@example.com',
                password='testpass123'
            )

        def test_create_contact_authenticated(self):
            self.client.login(username='testuser', password='testpass123')
            response = self.client.post('/api/contacts/', {
                'name': 'John Doe',
                'email': 'john@example.com'
            })
            self.assertEqual(response.status_code, 201)
            self.assertTrue(Contact.objects.filter(email='john@example.com').exists())

        def test_create_contact_unauthenticated(self):
            response = self.client.post('/api/contacts/', {
                'name': 'John Doe',
                'email': 'john@example.com'
            })
            # Depending on the auth setup this may be 403 instead of 401
            self.assertEqual(response.status_code, 401)

Django’s TestCase, Client, and ORM make tests readable and fast to write. AI knows these patterns deeply.

My Workflow

Here’s how I actually use this:

- Describe the feature to Claude (what it should do, edge cases I know about)

- Have Claude implement it (usually gets 80% right on first pass)

- Ask Claude for comprehensive tests (“Write Django tests that cover happy paths, edge cases, and error conditions”)

- Run the tests (`python manage.py test`)

- Fix what fails (often with Claude’s help)

The tests act as a specification. When they fail, I learn something about either the implementation or my requirements.

Real Numbers

From my last month of development on ContactAI:

- ~200 AI-generated tests

- ~15% failed on first run (roughly 30 tests, revealing real bugs)

- 3 tests had issues in the tests themselves (easily fixed)

- Total time saved: significant (these tests would have taken days to write manually)

The Trust But Verify Model

I don’t blindly trust AI-generated tests. I review them for:

- Coverage. Are the important paths tested?

- Assertions. Are they actually checking the right things?

- Setup. Is the test data realistic?

- Independence. Do tests depend on execution order?

But this review is faster than writing tests from scratch. And it catches the obvious issues.
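Two of those review points, assertions and independence, are easier to see in code. The sketch below is illustrative only: `apply_discount` and the cart fixture are hypothetical and not taken from ContactAI.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical function under test."""
    return round(price * (1 - percent / 100), 2)


class WeakAssertionTests(unittest.TestCase):
    def test_discount(self):
        # Passes for almost any non-zero result, so it proves very little.
        self.assertTrue(apply_discount(100.0, 20.0))


class StrongAssertionTests(unittest.TestCase):
    def test_discount_returns_exact_price(self):
        self.assertEqual(apply_discount(100.0, 20.0), 80.0)

    def test_full_discount_is_free(self):
        # The weak style would wrongly fail here because 0.0 is falsy.
        self.assertEqual(apply_discount(50.0, 100.0), 0.0)


class IndependentTests(unittest.TestCase):
    def setUp(self):
        # Fresh fixture per test, so execution order cannot matter.
        self.cart = []

    def test_add_item(self):
        self.cart.append("book")
        self.assertEqual(self.cart, ["book"])

    def test_cart_starts_empty(self):
        self.assertEqual(self.cart, [])
```

When reviewing generated tests, the first class is the pattern to flag and the other two are the patterns to ask for.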

When This Doesn’t Work

This approach has limitations:

- Complex business logic. When requirements are nuanced, you need to specify carefully.

- Integration with external services. Mocking strategies need human judgment.

- Performance tests. AI doesn’t know your scale requirements.

- Security tests. Critical paths need expert review.

For these cases, I write the test specifications myself and have AI implement them.
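A hand-written specification can be as simple as test names and docstrings, with the bodies delegated afterwards. The following is a minimal sketch under stated assumptions: `sync_contact`, the `create_contact` client method, and the response shape are all hypothetical stand-ins for an external service integration. The mocking uses the standard library's `unittest.mock`.

```python
import unittest
from unittest import mock


def sync_contact(api_client, contact: dict) -> bool:
    """Hypothetical function: push a contact to an external CRM client."""
    try:
        response = api_client.create_contact(contact)
    except TimeoutError:
        return False
    return response.get("status") == "created"


class SyncContactSpec(unittest.TestCase):
    """Test names and docstrings written by hand; bodies can be delegated."""

    def test_returns_true_when_service_confirms_creation(self):
        """A 'created' status from the service counts as success."""
        client = mock.Mock()
        client.create_contact.return_value = {"status": "created"}
        self.assertTrue(sync_contact(client, {"email": "john@example.com"}))
        client.create_contact.assert_called_once_with({"email": "john@example.com"})

    def test_returns_false_on_timeout(self):
        """A timeout must not raise; the caller decides whether to retry."""
        client = mock.Mock()
        client.create_contact.side_effect = TimeoutError
        self.assertFalse(sync_contact(client, {"email": "john@example.com"}))

    @unittest.skip("spec only: body still to be written")
    def test_does_not_log_contact_email(self):
        """Privacy requirement that has to come from a human."""
```

The skipped test shows up in every run as a reminder of a requirement that is specified but not yet implemented. The decision about what to mock, and what a timeout should mean for the caller, stays with the person writing the spec.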

The Productivity Multiplier

Here’s what surprised me most: AI-generated tests make AI-generated code better.

When AI knows tests will be written, it seems to generate more defensive code. When I’m iterating with failing tests, the feedback loop is tight and productive.

The combination of AI-generated code AND tests isn’t double the risk. It’s a productivity multiplier.

Try This Tomorrow

If you’re skeptical, try this experiment:

- Pick a small feature you need to build

- Have AI implement it

- Have AI write tests for it

- Run the tests

- Note what fails

I bet you’ll find real bugs. And you’ll have tests you can run forever.

The “vibe coding” critique assumes AI code goes untested. Test-driven AI coding is a different game entirely.