LLMs and the Control Flow Problem: Why AI Agents Fail at the Unexpected

Dec 18, 2025 · 3 min read

OpenAI-style transformers hit 99.7% accuracy on a problem harder than most benchmarks, and the reason why exposes a flaw in every AI agent today.

The Math Misconception

There’s a common narrative: “LLMs are bad at math.” People point to failures on arithmetic and conclude that language models lack numerical reasoning.

But here’s what’s strange: LLMs can solve incredibly complex mathematical reasoning problems. They can prove theorems, derive equations, and work through multi-step proofs.

So what’s actually going on?

It’s Not Math. It’s Control Flow.

The insight from recent research is that LLMs don’t struggle with math. They struggle with control flow.

Control flow means:

- Loops that run an arbitrary number of times

- Branches that depend on runtime values

- Recursion with variable depth

- State that accumulates across steps

Traditional computers handle all of these perfectly. You write a loop and it executes exactly as many times as needed, whether that’s 3 times or 3 million.

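Transformers don’t work this way. Each forward pass runs a fixed number of layers over a bounded context window, so the computation spent per token doesn’t grow with how hard the input is. There’s no native loop construct. Every “iteration” has to be encoded somehow, either inside that fixed-depth pass or in the tokens the model generates.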
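To make the contrast concrete, here is a minimal sketch using Euclid's algorithm. It is only an analogy, not a model of a transformer: `gcd_fixed_depth` and its `depth` parameter are made up for illustration, standing in for any computation that gets a fixed step budget regardless of input.

```python
def gcd_loop(a: int, b: int) -> int:
    """Euclid's algorithm: the loop runs as many times as the input requires."""
    while b:
        a, b = b, a % b
    return a


def gcd_fixed_depth(a: int, b: int, depth: int = 3) -> int:
    """Same update rule, but capped at a fixed number of steps (illustrative only).

    If the input needs more than `depth` steps, the answer is silently wrong.
    """
    for _ in range(depth):
        if b == 0:
            break
        a, b = b, a % b
    return a


print(gcd_loop(48, 18), gcd_fixed_depth(48, 18))  # 6 6   (3 steps are enough)
print(gcd_loop(89, 55), gcd_fixed_depth(89, 55))  # 1 21  (needs 10 steps, gets 3)
```

The fixed-depth version is right whenever the input happens to fit its budget, and wrong without warning when it doesn't.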

Why This Matters for AI Agents

AI agents (Claude Code, Cursor, and other agentic systems) are essentially LLMs trying to do control flow.

Think about what an agent does:

1. Look at the current state

2. Decide what action to take

3. Execute the action

4. Check if the goal is achieved

5. If not, go to step 1
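The steps above can be sketched as ordinary code. This is a rough sketch, not how any particular agent is implemented: `run_agent`, `decide`, `execute`, and `goal_reached` are hypothetical names, and the `max_steps` cap is my own addition so the example always terminates.

```python
from typing import Callable, Tuple, TypeVar

S = TypeVar("S")  # agent state
A = TypeVar("A")  # action


def run_agent(
    state: S,
    decide: Callable[[S], A],
    execute: Callable[[S, A], S],
    goal_reached: Callable[[S], bool],
    max_steps: int = 10,  # assumption: a step budget, not part of the steps above
) -> Tuple[S, int]:
    """Run the look/decide/execute/check cycle; return final state and steps used."""
    for step in range(1, max_steps + 1):
        action = decide(state)          # steps 1-2: look at the state, pick an action
        state = execute(state, action)  # step 3: execute the action
        if goal_reached(state):         # step 4: check whether the goal is achieved
            return state, step
        # step 5: goal not reached, so go around again
    return state, max_steps  # budget exhausted without reaching the goal


# Toy usage: the "agent" counts up to 3.
final_state, steps_used = run_agent(
    state=0,
    decide=lambda s: 1,
    execute=lambda s, a: s + a,
    goal_reached=lambda s: s >= 3,
)
print(final_state, steps_used)  # 3 3
```

In a real agent, `decide` and `goal_reached` are the parts the model is asked to perform, which is where the trouble starts.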

This is a loop. And loops are exactly what transformers struggle with.

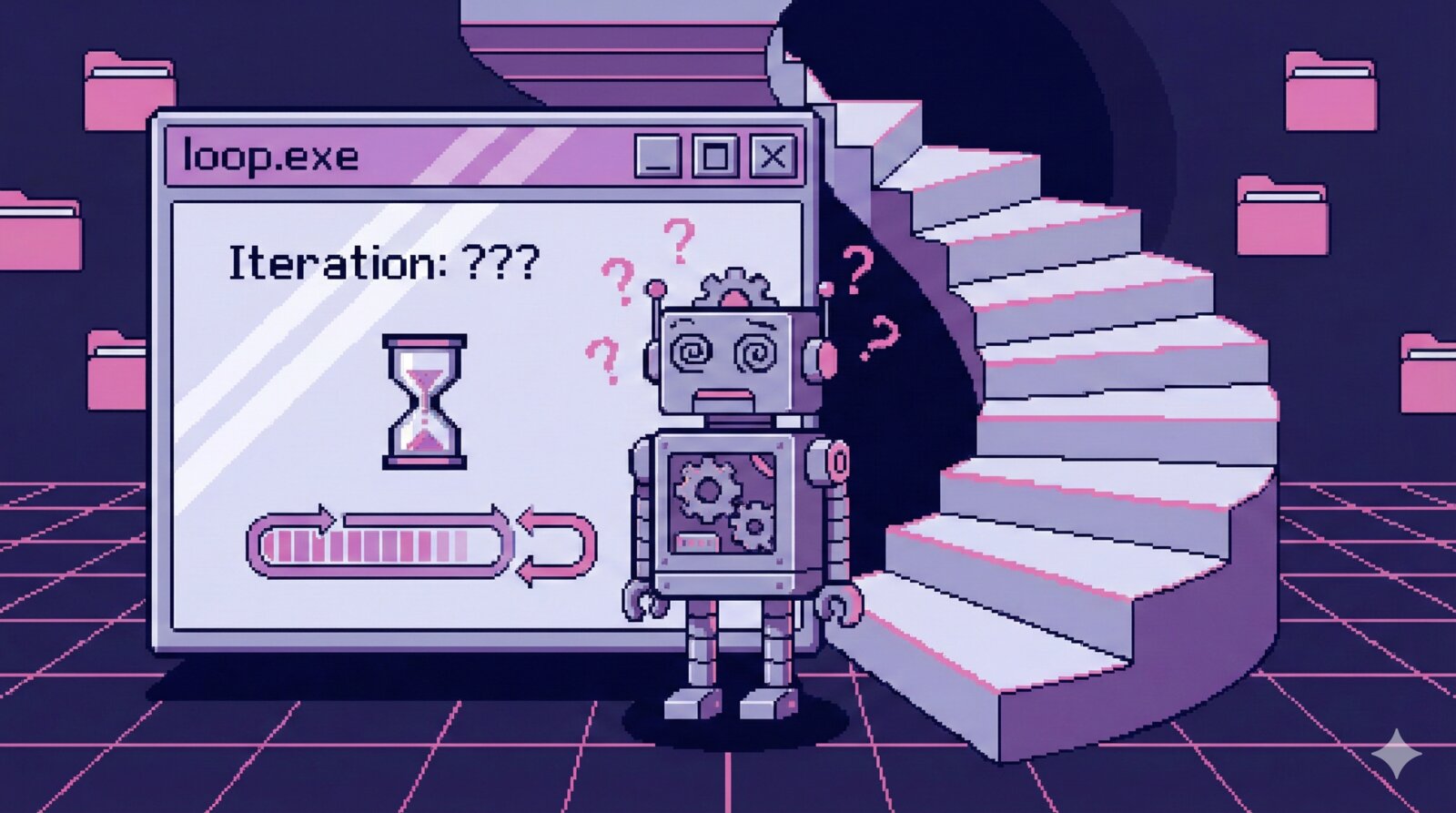

That’s why you see agents:

- Going in circles (loop that doesn’t terminate)

- Missing exit conditions (loop runs too long)

- Giving up too early (loop doesn’t run enough)

- Losing track of progress (state management across iterations)
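The first symptom, going in circles, is one a harness can sometimes catch from the outside. Here is a small sketch under a strong assumption: that each step can be summarized as a hashable (state, action) pair, and that seeing the same pair twice is a reasonable hint of circling. `first_repeat` and the trace format are hypothetical.

```python
from typing import Hashable, Optional, Sequence


def first_repeat(trace: Sequence[Hashable]) -> Optional[int]:
    """Return the index of the first step already seen earlier in the trace, or None."""
    seen = set()
    for index, step in enumerate(trace):
        if step in seen:
            return index
        seen.add(step)
    return None


trace = [
    ("tests failing", "edit a.py"),
    ("tests failing", "run tests"),
    ("tests failing", "edit a.py"),  # same state, same action as step 0
]
print(first_repeat(trace))  # 2
```

This is a heuristic only. A repeated pair can be legitimate, and an agent can circle without ever repeating itself exactly.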

The 99.7% Problem

The research I referenced showed transformers achieving 99.7% accuracy on certain numerical tasks, specifically tasks that could be solved with pattern matching and a fixed number of reasoning steps.

But change the problem slightly (make it require a variable number of steps based on the input) and accuracy collapses.

It’s not that the model can’t do the reasoning. It’s that it can’t reliably determine how much reasoning to do.

What This Means Practically

When working with AI agents, keep in mind:

Tasks that work well:

- Fixed-step procedures

- Pattern matching

- Transformation with known structure

- Analysis with bounded scope

Tasks that agents struggle with:

- “Keep going until done”

- Variable-depth recursion

- Finding something that might not exist

- Complex state management across many steps

Mitigations That Help

There are ways to work around the control flow problem:

Bounded iterations. Instead of “fix all bugs,” try “check for and fix up to 3 bugs.”

Explicit state management. Give the agent a scratchpad and ask it to track progress explicitly.

Checkpoints. Break long tasks into phases with human verification between them.

Structured output. Ask for explicit loop tracking: “iteration 1 of N, current state: X, next action: Y.”

Early termination signals. Give clear criteria for when to stop.
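Several of these mitigations can live in the harness around the agent rather than in the prompt. The sketch below combines bounded iterations, an explicit scratchpad, structured progress lines, and a stop signal. Everything in it is hypothetical: `Scratchpad`, `fix_bugs`, and the `find_bug` / `fix_bug` callables stand in for whatever your agent actually does.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Scratchpad:
    """Explicit state kept outside the model so progress is never implicit."""
    done: List[str] = field(default_factory=list)
    log: List[str] = field(default_factory=list)


def fix_bugs(
    find_bug: Callable[[Scratchpad], Optional[str]],  # returns None when nothing is left
    fix_bug: Callable[[str], None],
    max_fixes: int = 3,  # bounded iterations: "fix up to 3 bugs"
) -> Scratchpad:
    pad = Scratchpad()
    for i in range(1, max_fixes + 1):
        bug = find_bug(pad)
        if bug is None:  # early termination signal: clear criterion for stopping
            pad.log.append(
                f"iteration {i} of {max_fixes}, current state: no bugs found, next action: stop"
            )
            break
        # Structured output: record where we are before acting.
        pad.log.append(
            f"iteration {i} of {max_fixes}, current state: {len(pad.done)} fixed, "
            f"next action: fix {bug}"
        )
        fix_bug(bug)
        pad.done.append(bug)
    return pad
```

The point of the design is that the loop counter, the stop check, and the record of progress are all held by code, so the model only has to handle one iteration at a time.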

The Architectural Limitation

This isn’t something that can be fixed with more training or better prompts. It’s a fundamental architectural limitation of transformers.

Some research directions that might help:

- Looped transformers that can iterate internally

- Hybrid architectures with explicit control flow primitives

- Tool use for algorithmic tasks (let the LLM decide WHAT to do, let code handle HOW MANY times)
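The last item in that list is the one you can apply today. A minimal sketch, assuming the model replies with a small JSON object naming a tool: the `TOOLS` registry, the `{"tool": ...}` format, and `run_tool_call` are all invented for illustration and don't correspond to any specific agent framework.

```python
import json
from typing import Callable, Dict, List

# Hypothetical tool registry: each tool is a plain function applied to one item.
TOOLS: Dict[str, Callable[[str], str]] = {
    "strip": str.strip,
    "upper": str.upper,
}


def run_tool_call(model_output: str, items: List[str]) -> List[str]:
    """The model's JSON names WHAT to do; the loop here handles HOW MANY times."""
    call = json.loads(model_output)
    tool = TOOLS[call["tool"]]  # raises KeyError on an unknown tool name
    return [tool(item) for item in items]


# The model makes one decision; code applies it to every item, however many there are.
print(run_tool_call('{"tool": "upper"}', ["a", "b", "c"]))  # ['A', 'B', 'C']
```

The model never has to count or track how far along it is. It makes a single fixed-step choice, and the iteration is delegated to something that is actually good at it.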

But for now, the limitation is real, and understanding it helps you work with AI agents more effectively.

The Practical Takeaway

When an AI agent fails, ask yourself: “Is this fundamentally a control flow problem?”

If the task requires:

- Determining how many iterations to perform

- Managing state across an unknown number of steps

- Searching until a condition is met

Then you’re fighting the architecture. Consider restructuring the task, adding human checkpoints, or using tools for the parts that require reliable iteration.

The AI isn’t stupid. It’s just not built for loops.

Understanding this distinction will save you hours of frustration and help you design better human-AI workflows.
